Problem:

When a machine learning system is being trained through the user's repeated inputs, the user wants to know that this is the case.

Solution:

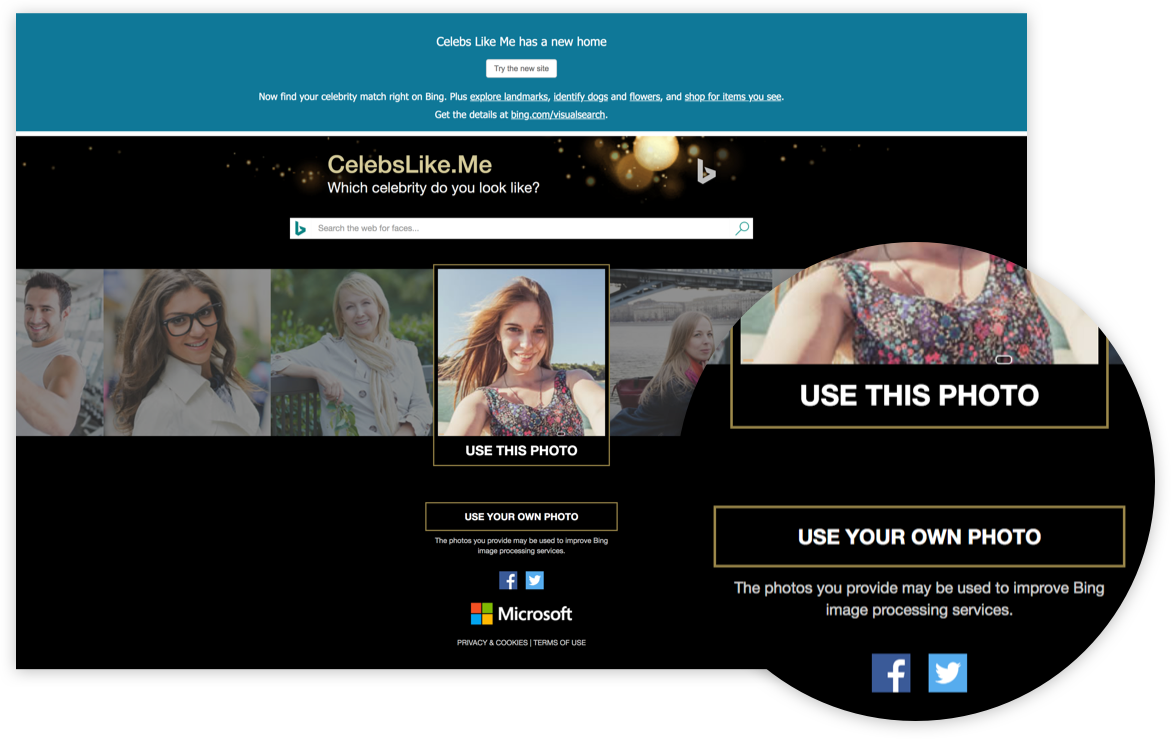

The system tells the user when it is using their inputs for training, especially when those inputs are unrelated to what the user is trying to accomplish.
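As a rough illustration, a conversational UI might attach the disclosure at the moment it renders a prompt. The sketch below is one possible shape, not a prescribed implementation: the `Prompt` type, its `used_for_training` and `serves_user_task` flags, the `render_prompt` function, and the notice wording are all hypothetical names invented for this example.

```python
from dataclasses import dataclass

# Hypothetical wording; a real product would tune this copy.
TRAINING_NOTICE = "Your reply will also be used to improve future suggestions."


@dataclass
class Prompt:
    text: str
    used_for_training: bool        # True when the answer is stored as a training example
    serves_user_task: bool = True  # False when the question is asked only to collect data


def render_prompt(prompt: Prompt) -> str:
    """Return the prompt text, appending a disclosure when the input feeds training."""
    if not prompt.used_for_training:
        return prompt.text
    notice = TRAINING_NOTICE
    if not prompt.serves_user_task:
        # Inputs unrelated to the user's goal get a more explicit disclosure.
        notice = "This question isn't needed for your request. " + notice
    return f"{prompt.text}\n({notice})"


print(render_prompt(Prompt("Which of these photos shows a cat?", True, serves_user_task=False)))
```

Keeping the disclosure in the rendering step means every training-related prompt carries the notice, rather than relying on each dialogue author to remember it.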

Discussion:

When the user has a long-term relationship with machine learning software, one in which the software improves over time, it's important to set expectations about that improvement up front. Doing so also mitigates some of the negative impressions that can form while the AI is still "in training" and imprecise: acknowledging inaccuracy makes it clear that improvement can be expected. The pattern also goes to the heart of transparency: if the system is capturing data from the user, it should communicate why that data is needed. It is especially relevant for chatbots and other conversational UIs, where the user may be in a long-term, conversation-based relationship with the system.