Problem:

When the AI acts on variables supplied by the user, the user wants to compare their original input with the resulting output, so that they can better understand how to achieve the output they want.

Solution:

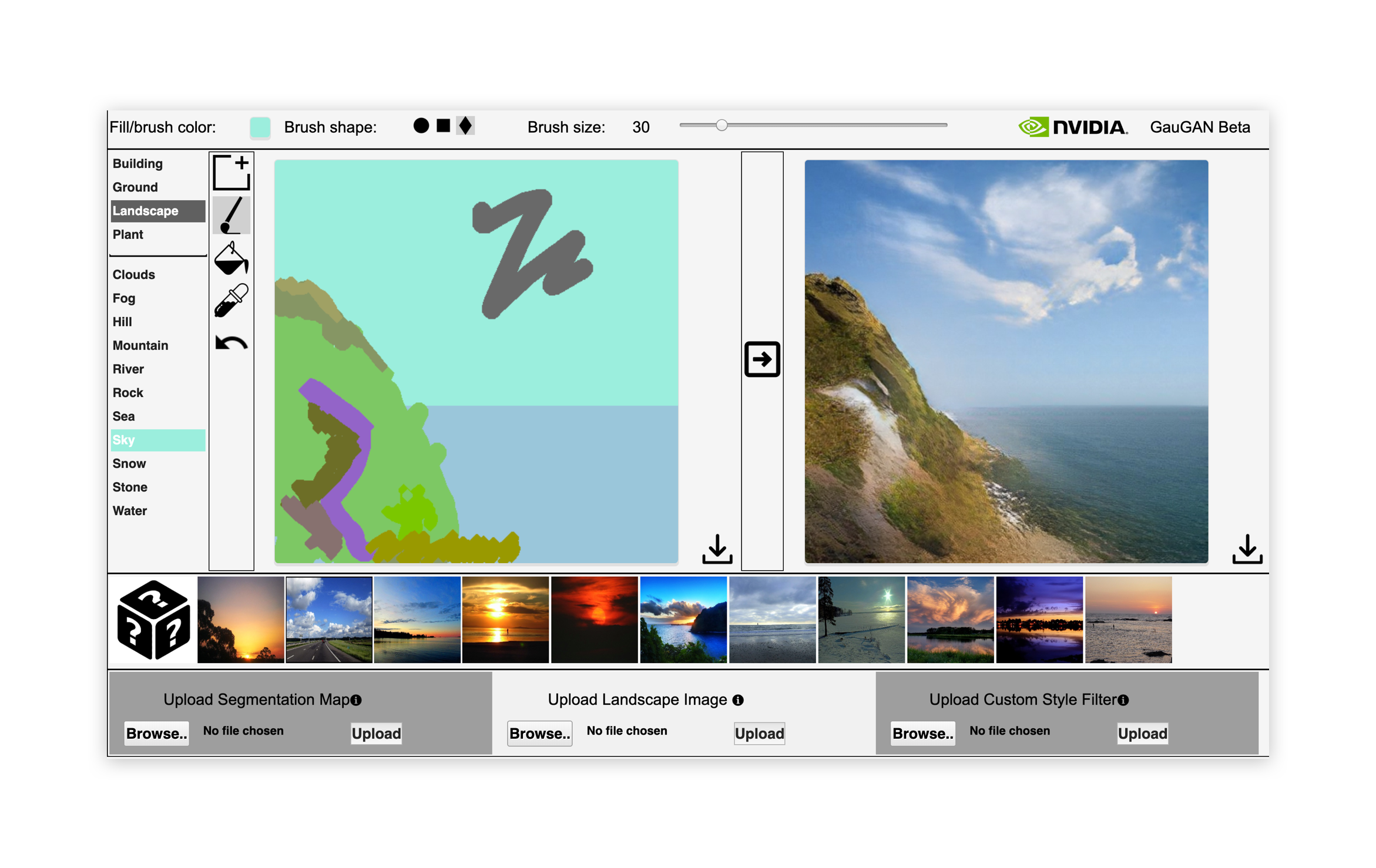

The system presents a summary of the user's input next to the resulting output. Because the output already translates the user's input into something new, the input feedback should closely resemble the format in which the input was entered. For example, if the user entered data as variables to be converted into graphics, the input feedback should be shown as variables, not graphics.
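As a rough illustration of the idea, the sketch below keeps the submitted variables together with the output they produced and renders the input summary as variables. It is a minimal sketch under stated assumptions, not a prescribed implementation: `OperationResult`, `input_feedback`, `render`, and the sample values are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperationResult:
    """Hypothetical record pairing the user's variables with the AI's output."""
    inputs: dict[str, str]  # variables exactly as the user entered them
    output: str             # placeholder reference to the generated artefact


def input_feedback(result: OperationResult) -> list[str]:
    """Summarise the input as variables, mirroring the format the user typed."""
    return [f"{name}: {value}" for name, value in result.inputs.items()]


def render(result: OperationResult) -> str:
    """Place the input summary and the output in a single view."""
    lines = ["Your input"]
    lines += [f"  {line}" for line in input_feedback(result)]
    lines += ["Result", f"  {result.output}"]
    return "\n".join(lines)


# Illustrative values only.
result = OperationResult(
    inputs={"style": "watercolour", "subject": "harbour at dawn"},
    output="generated-image.png",
)
print(render(result))
```

The input summary is built directly from the stored variables rather than derived from the output, so it stays faithful to what the user actually submitted.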

Discussion:

This pattern assumes that the user submits data for processing and then waits for a response. In systems where controls affect the output directly through real-time tweaking, the controls themselves suffice as input feedback, as long as they remain visible (e.g. they are not automatically hidden in a collapsed panel once applied).

In more conventional, non-AI applications, replaying the user's input to them can be redundant: their mental model of cause and effect is strong, and they fully grasp how their input relates to the output. In AI operations, however, the AI can act in unanticipated ways, which makes it harder for the user to understand what control they have over it and how to guide it towards their desired results. Keeping the input in the same context as the output lets the user explore by trial and error over repeated operations and so develop their understanding of the system.
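One way a system might support this trial-and-error loop is to highlight which variables changed between two consecutive attempts, so the user can link a change in the output to a specific change in their input. This goes slightly beyond what the pattern itself requires, and the helper `changed_inputs` and the sample attempts are hypothetical.

```python
from typing import Optional

# (old value, new value); None means the variable was absent in that attempt.
Change = tuple[Optional[str], Optional[str]]


def changed_inputs(previous: dict[str, str], current: dict[str, str]) -> dict[str, Change]:
    """Return only the variables whose values differ between two attempts."""
    names = sorted(previous.keys() | current.keys())
    return {
        name: (previous.get(name), current.get(name))
        for name in names
        if previous.get(name) != current.get(name)
    }


# Illustrative attempts only.
first = {"style": "watercolour", "subject": "harbour at dawn"}
second = {"style": "ink sketch", "subject": "harbour at dawn"}

print(changed_inputs(first, second))
# {'style': ('watercolour', 'ink sketch')}
```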

A similar pattern is Before & After Comparison, and in practice the two may overlap. There is an important distinction, however. Before & After Comparison lets a user inspect two objects in order to infer the effect of the AI on the processed version and validate its success, whereas Input & Output Comparison focuses on helping the user establish a sense of control over the operation by building a picture of cause and effect.