Problem:

When an algorithm uses pattern recognition to track the position of the user’s body in real time, the user wants to understand what the system sees and how their movements affect it.

Solution:

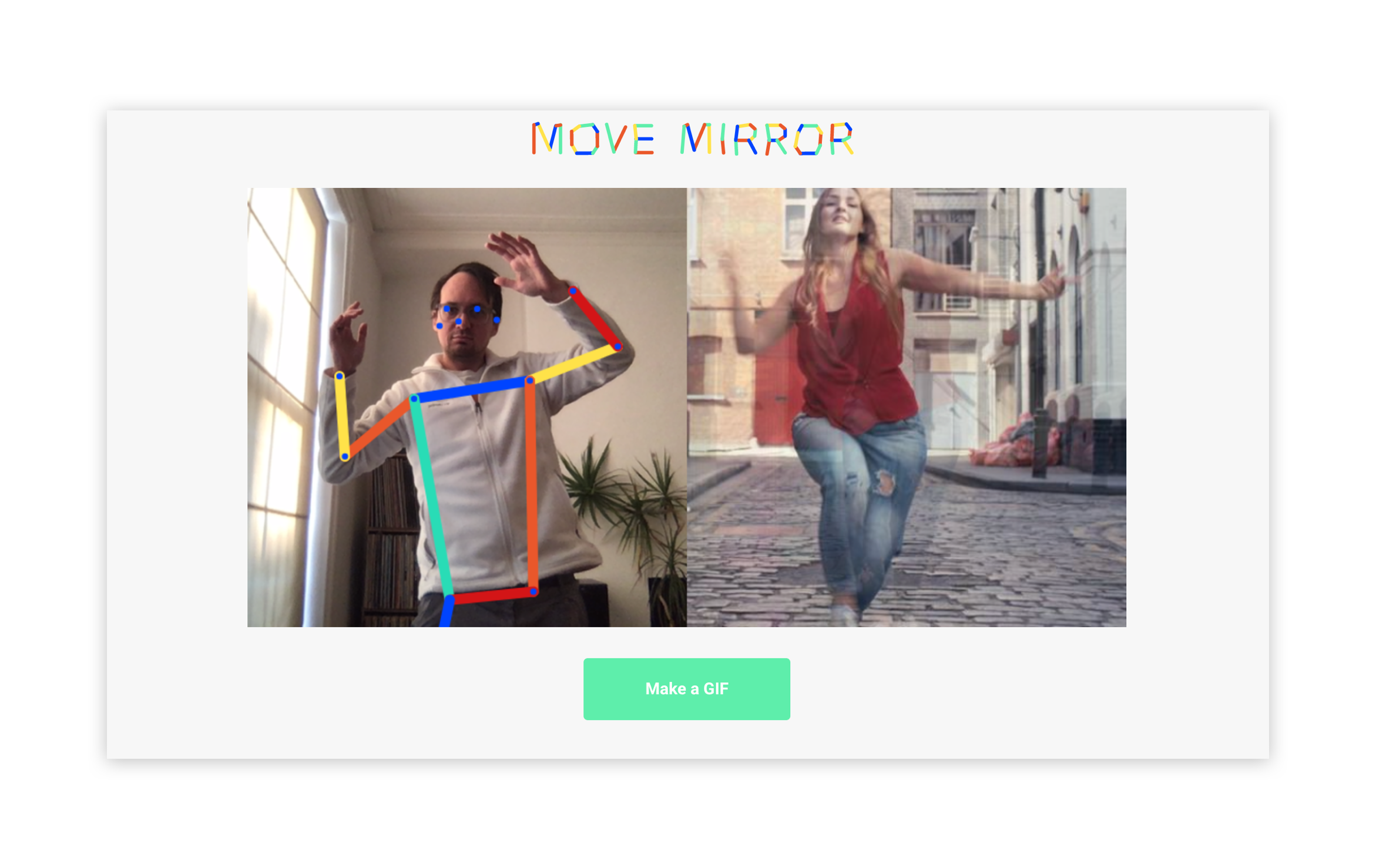

In an overlay on the video capture, the system shows a simplified representation of the points on the body that it is tracking, often connected in a wireframe model of lines and nodes. This offers an intermediate abstraction between the user’s body and the underlying numerical variables that the system actually uses.
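As a rough illustration of that intermediate abstraction, the sketch below turns a list of tracked points into the nodes and lines an overlay would draw. Everything in it is an assumption made for the example rather than any particular tracker's API: the `Keypoint` fields, the point names, the `SKELETON_EDGES` list, the `build_overlay` helper, and the 0.5 confidence cutoff are all hypothetical. Coordinates are assumed to be normalized to the range 0 to 1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Keypoint:
    """One tracked body point (hypothetical shape, not a real tracker's output)."""
    name: str
    x: float      # horizontal position, normalized 0..1 (assumed)
    y: float      # vertical position, normalized 0..1 (assumed)
    score: float  # tracker confidence, 0..1 (assumed)


# Hypothetical wireframe: which named points are joined by a line.
SKELETON_EDGES = [
    ("left_shoulder", "right_shoulder"),
    ("left_shoulder", "left_elbow"),
    ("left_elbow", "left_wrist"),
    ("right_shoulder", "right_elbow"),
    ("right_elbow", "right_wrist"),
]


def build_overlay(keypoints, frame_width, frame_height, min_score=0.5):
    """Return (nodes, lines) in pixel coordinates for drawing over a video frame.

    Points below min_score are left out, so the overlay only shows what the
    system is reasonably confident it sees.
    """
    visible = {
        kp.name: (round(kp.x * frame_width), round(kp.y * frame_height))
        for kp in keypoints
        if kp.score >= min_score
    }
    nodes = list(visible.values())
    # A line is drawn only when both of its endpoints are visible.
    lines = [
        (visible[a], visible[b])
        for a, b in SKELETON_EDGES
        if a in visible and b in visible
    ]
    return nodes, lines


# Example frame: the left wrist is tracked with low confidence.
keypoints = [
    Keypoint("left_shoulder", 0.40, 0.30, 0.95),
    Keypoint("right_shoulder", 0.60, 0.30, 0.93),
    Keypoint("left_elbow", 0.35, 0.50, 0.88),
    Keypoint("left_wrist", 0.30, 0.70, 0.20),
]
nodes, lines = build_overlay(keypoints, frame_width=640, frame_height=480)
print(len(nodes), "nodes,", len(lines), "lines")
```

In this sketch the low-confidence wrist is dropped along with the line that would connect it, which is one plausible way for the overlay to show the user where tracking has been lost.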

Discussion:

For many AI systems, the key usability challenge lies in how the user builds their mental model of what the system is doing. This is especially relevant when the system captures something concrete and intuitive from the user (e.g. the position of their body and limbs) and converts that input into intangible data points in order to process it or generate new outputs from it. The intermediary visual neither fully represents how the user thinks about their body nor fully manifests the abstract variables under the surface of the software. Even so, it is an effective vehicle for communicating the translation of body to data that is happening in that moment.