Problem:

Users want to know how much trust to place in the system's intelligence, especially in critical applications such as diagnosing health issues, where following bad advice can have real-world consequences.

Solution:

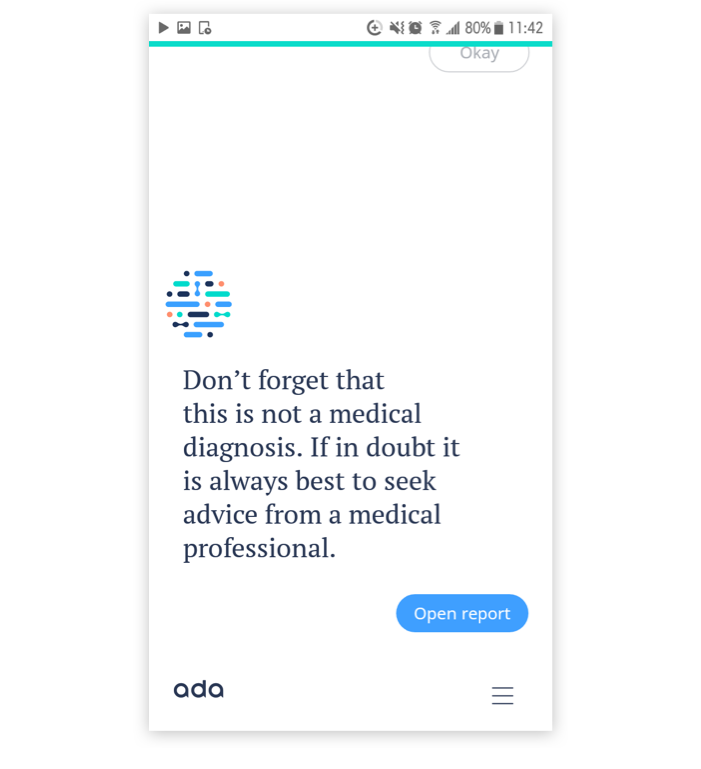

In combination with Setting Expectations & Acknowledging Limitations, the system alerts users to the risks they may encounter in following its advice and explains how to mitigate those risks (for example, by seeking further advice from an expert before taking any action).
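As a rough illustration of the pattern, the sketch below attaches a risk notice to advice before it is shown to the user. Everything in it is hypothetical: the `RiskLevel` categories, the `Advice` type, the `present` function, and the notice wording are assumptions made for this example, not part of any particular product or library.

```python
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    """Hypothetical risk categories; a real product would define its own."""
    LOW = "low"    # e.g. clothing or movie recommendations
    HIGH = "high"  # e.g. health-related suggestions


@dataclass
class Advice:
    text: str
    risk: RiskLevel


# Assumed wording: states the risk and how to mitigate it.
RISK_NOTICES = {
    RiskLevel.HIGH: (
        "This suggestion is generated automatically and may be wrong. "
        "Seek advice from a qualified expert before acting on it."
    ),
}


def present(advice: Advice) -> str:
    """Return the advice text, followed by a risk notice when one applies."""
    notice = RISK_NOTICES.get(advice.risk)
    if notice is None:
        return advice.text
    return f"{advice.text}\n\nWarning: {notice}"


if __name__ == "__main__":
    print(present(Advice("These symptoms may indicate a vitamin deficiency.", RiskLevel.HIGH)))
```

Keeping the notice text in one table, keyed by risk level, makes it easier to review the wording with legal and domain experts separately from the display logic.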

Discussion:

While many faulty AI-driven predictions will result in little more than an ill-fitting dress or a movie the user dislikes, some are far more impactful. Great care should be taken in communicating risks to the user, both because there can be legal and commercial implications and because there is a moral imperative to reduce harm where possible.