Problem:

Users with vision impairments want to understand what image content exists in the interface. System designers want to support this without manually writing alt text for every image.

Solution:

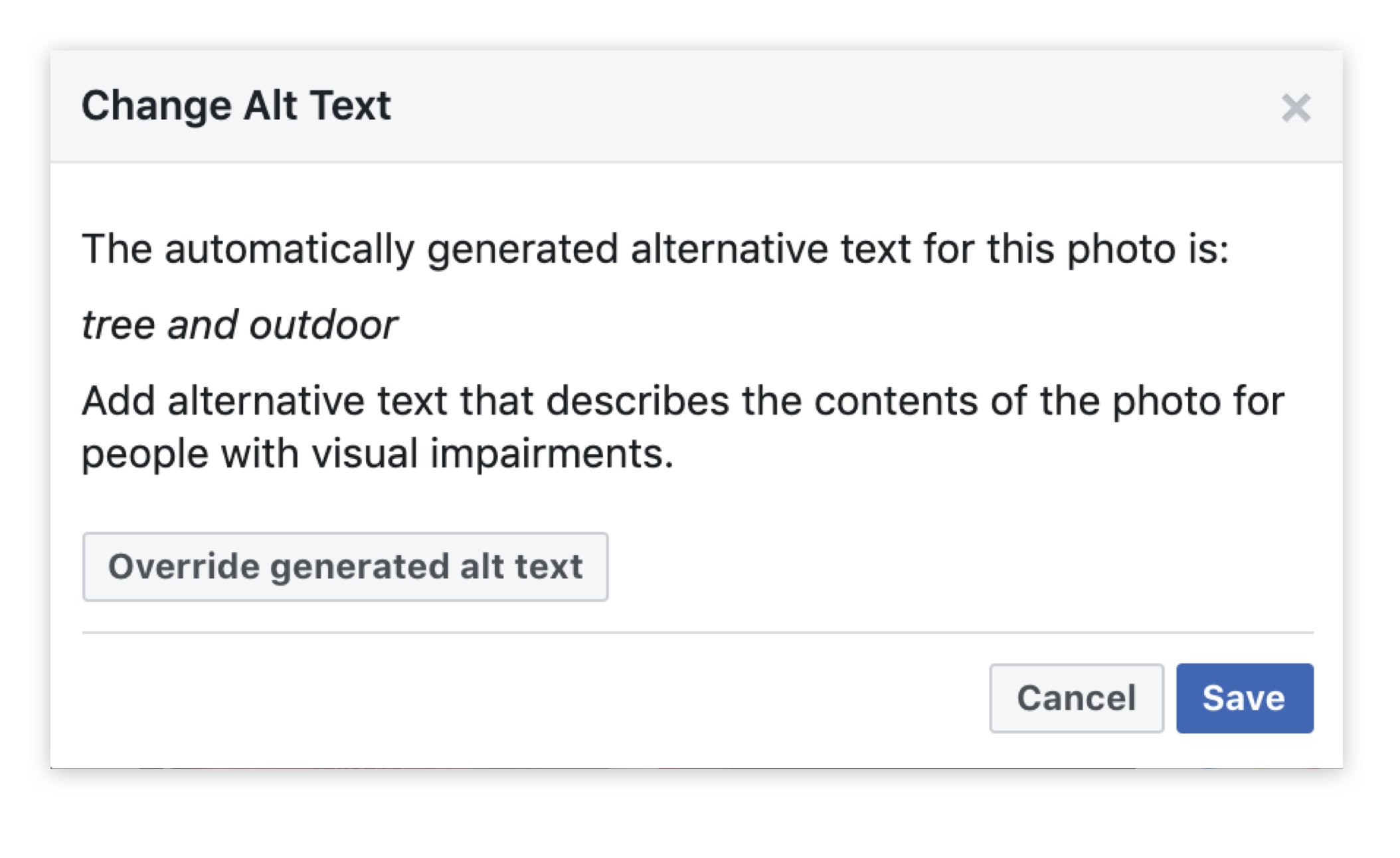

Image recognition automatically generates alt text for each image based on what the AI identifies in it.

Discussion:

While most patterns in this library focus on benefits to the user, in some cases the value of AI is realized not by the end user but by those responsible for designing and maintaining the system. Here, the primary users of alt text will care little how it is generated, as long as it exists. Designers, on the other hand, can use patterns like this to broaden their thinking about where data can come from, and to ask which source is best in each case: the original uploader or content manager, crowdsourcing across users, or the system itself.

Each source has its own advantages in the accuracy, authority, breadth, and depth of the data it produces, but the AI approach is unique in requiring the least human effort. That said, it doesn’t pay to be completely hands-off: automated content tags of any kind benefit from an additional layer of human oversight, where end users can moderate the data ad hoc by flagging anything they believe is erroneous or offensive.
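As a rough illustration of that flagging layer, the Python sketch below models an AI-generated alt-text record that end users can flag. The `AltText` class, the `source` labels, and the `REVIEW_THRESHOLD` of three distinct flags are hypothetical choices made for this example, not part of any particular system; a real product would also need to persist flags and route flagged text to a human editor.

```python
from dataclasses import dataclass, field

# Hypothetical threshold: number of distinct users whose flags send alt text to human review.
REVIEW_THRESHOLD = 3


@dataclass
class AltText:
    image_id: str
    text: str
    source: str = "ai"  # hypothetical labels: "ai", "uploader", or "crowd"
    flagged_by: set = field(default_factory=set)
    needs_review: bool = False

    def flag(self, user_id: str) -> None:
        """Record a user's flag; repeat flags from the same user count only once."""
        self.flagged_by.add(user_id)
        if len(self.flagged_by) >= REVIEW_THRESHOLD:
            self.needs_review = True


def review_queue(records):
    """Return the AI-generated alt text that enough users have flagged."""
    return [r for r in records if r.source == "ai" and r.needs_review]


# Usage: three distinct users flag the same auto-generated description.
tag = AltText("img-42", "A dog sitting on a beach")
for user in ["u1", "u2", "u2", "u3"]:
    tag.flag(user)
print(tag.needs_review)  # True
```

Counting distinct users rather than raw flags keeps a single user from pushing an image into review alone; the threshold itself trades moderation workload against how long an erroneous or offensive description stays visible.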