Problem:

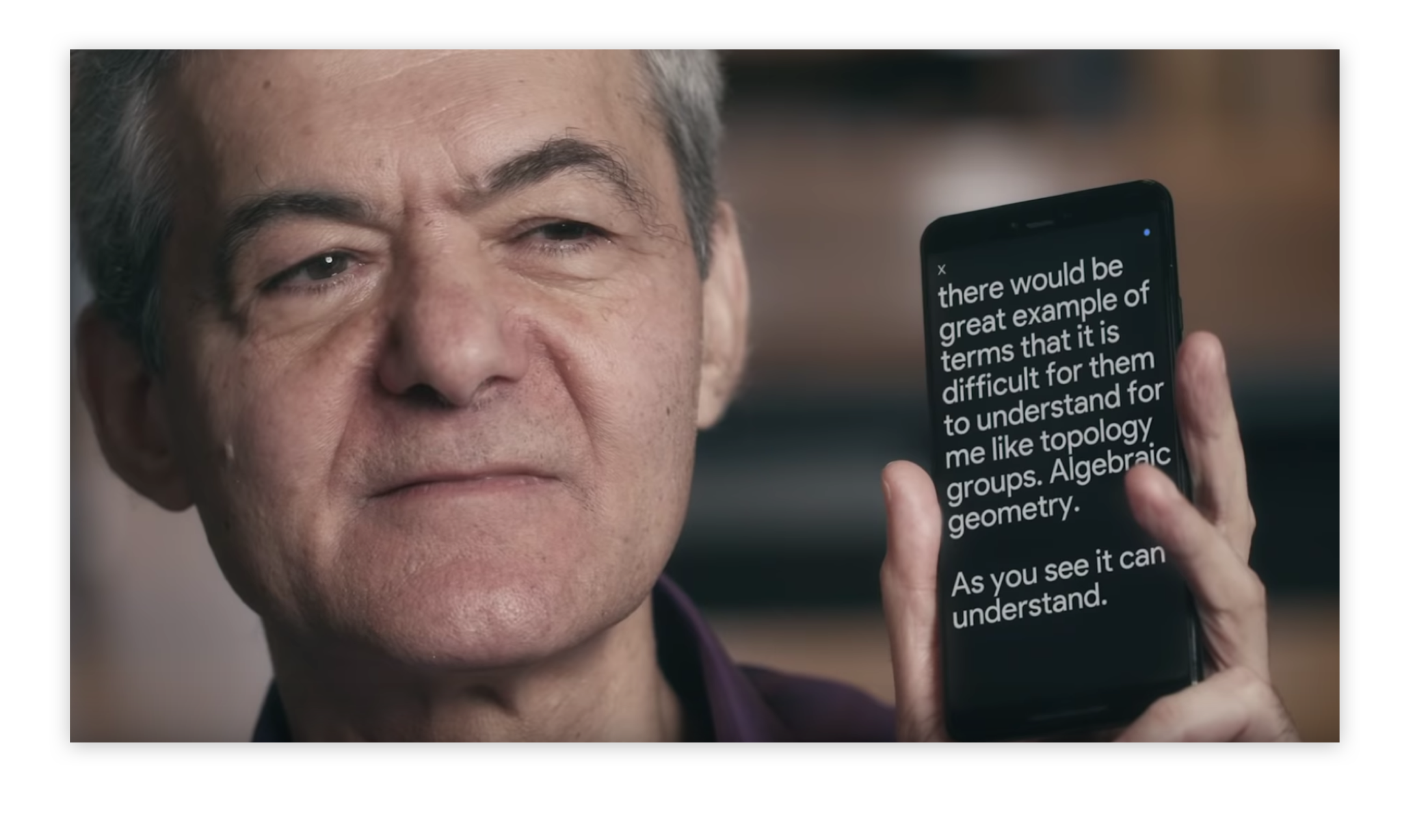

Users with speech impairments would like to use voice interfaces, but the software often fails to recognize their speech.

Solution:

The AI that powers an inclusive voice interface is trained with voices from users with speech impairments so that it can understand their inputs. Collective training on recordings from many such users provides a baseline level of recognition, and additional training for each individual then optimizes the system for that person’s speech patterns.
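
The two training stages can be illustrated with a deliberately small sketch. It is a toy nearest-centroid recognizer, not the method of any particular product: real systems train neural acoustic models on audio. The two-number feature vectors, the command names, and the `prior_weight` parameter are all assumptions made up for illustration.

```python
from collections import defaultdict
from math import dist


def mean(vectors):
    """Element-wise mean of equal-length feature vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def group_by_command(samples):
    """Group (command, features) pairs into {command: [features, ...]}."""
    grouped = defaultdict(list)
    for command, features in samples:
        grouped[command].append(features)
    return grouped


def train_baseline(pooled_samples):
    """Collective training: one centroid per command from many speakers."""
    grouped = group_by_command(pooled_samples)
    return {command: mean(vectors) for command, vectors in grouped.items()}


def personalize(baseline, user_samples, prior_weight=2.0):
    """Individual training: pull each centroid toward one user's recordings.

    prior_weight (hypothetical) is how many user recordings the baseline
    centroid counts as; more user data means the baseline matters less.
    """
    adapted = dict(baseline)
    for command, vectors in group_by_command(user_samples).items():
        n = len(vectors)
        user_mean = mean(vectors)
        prior = baseline.get(command, user_mean)
        adapted[command] = [
            (prior_weight * p + n * u) / (prior_weight + n)
            for p, u in zip(prior, user_mean)
        ]
    return adapted


def recognize(model, features):
    """Return the command whose centroid is nearest to the input."""
    return min(model, key=lambda command: dist(model[command], features))


# Made-up pooled data standing in for the collective training set.
pooled = [
    ("lights on", [1.0, 0.0]), ("lights on", [0.8, 0.2]),
    ("lights off", [0.0, 1.0]), ("lights off", [0.2, 0.8]),
]
# Made-up recordings from one user whose "lights on" sits far from the pool.
user = [
    ("lights on", [0.3, 0.7]), ("lights on", [0.35, 0.65]),
    ("lights on", [0.25, 0.75]), ("lights on", [0.3, 0.7]),
    ("lights off", [0.05, 0.95]), ("lights off", [0.0, 1.0]),
]

baseline = train_baseline(pooled)
personal = personalize(baseline, user)
utterance = [0.35, 0.65]  # this user saying "lights on"
```

With this data, `recognize(baseline, utterance)` picks "lights off", while `recognize(personal, utterance)` picks "lights on". Blending the two centroids, rather than replacing the baseline outright, means a user with only a handful of recordings still benefits from the collective model.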

Discussion:

Accessibility issues are well understood when it comes to traditional web applications, and the need for interfaces that are accessible to users with vision loss or physical impairments, for example, is widely acknowledged (even if fully accessible UIs are not provided as widely as they should be). By comparison, it is easy to overlook the issues that users may have with emergent interfaces, especially those that rely on AI or ML. Unlike missing alt text for screen readers, for instance, a failure of this kind gives the able-bodied tester few indications that the interface will not work for some users. Voice interfaces are a useful case study in that regard: it is only through inclusive interfaces like Voiceitt or Google’s Project Euphonia that we are made aware of how inadequate our standard provision may be.

Going further, we should not just consider how these projects can make ordinary software accessible to all users. We should also embrace opportunities to leverage the unique properties of these emergent interfaces in order to significantly improve the quality of life for people with speech impairments, such as those with cerebral palsy, amyotrophic lateral sclerosis (ALS), Parkinson’s disease, brain cancer, or traumatic brain injury.